Setting up SAP Web Dispatcher with TLS via Step-CA, Let's Encrypt and a private CA

GitHub link to project

The SAP Web Dispatcher setup runs in my own homelab and connects to the SAP S/4HANA trial Docker container. I set up TLS three ways: with Step-CA, with Let’s Encrypt and with a private CA built using openssl. TLS is an increasingly important part of my day job.

Following on from my post about setting up Gitea and my adventures with Docker (https://www.rjruss.info/2024/11/docker-setting-up-gitea-with-postgres.html), I use a similar template approach for the Web Dispatcher containers.

Two things made it more complicated. The first was security: some security frameworks treat passwords stored in clear text as an instant fail/breach. The second was my decision to keep each container definition in its own directory. A build script collects them all into one compose file.

The containers are as follows:

| Container Name | Description |
|---|---|
| sap-webpriv-run | Web Dispatcher with a private CA using openssl |
| sap-weblets-run | Web Dispatcher with Let’s Encrypt |
| sap-webstepacme-run | Web Dispatcher with Step-CA using the ACME provisioner (requires port 443) |
| sap-webstep-run | Web Dispatcher with Step-CA using the token provisioner |
| sap-step-run | Step-CA container used by the sap-webstepacme-run and sap-webstep-run containers |

*All steps need to be executed as the root user unless otherwise stated.

Step 1 Set up the local user and directory

Create a dedicated Docker user, in this example dockeruser1. If you change the name, the .env file needs to be adapted in step 2.

The directory where the downloaded project is stored can be adapted as well. The example uses /srv/docker-config and the downloaded file sapwebdisp-stepca-letsencrypt-docker-main.zip.

```bash
sudo su -
useradd -m -s /bin/bash -u 1245 dockeruser1
mkdir /srv/docker-config
cd /srv/docker-config
unzip {downloaded zip file} -d .
cd /srv/docker-config/sapwebdisp-stepca-letsencrypt-docker
./initialGitHubScript.sh
```

Step 2 Adapt the .env file

If required, change the user, group, volume directory, domain and host name entries described below. Ignore any other settings in the .env file.

The local Docker user and group need to match the user created in step 1.

```bash
#LOCAL_SETUP - used by first_setup.sh scripts
LOCAL_DOCKER_USER=dockeruser1
LOCAL_DOCKER_GROUP=dockeruser1
# LOCAL_DOCKER_VOLUME_DIR directory will be created if it does not exist
LOCAL_DOCKER_VOLUME_DIR=/docker-vol1
DOMAIN=rjruss.org
BASE_HOST=hawtrial-
STEP_HOST=${BASE_HOST}step
SP_SHARED_ENV_GROUP=5501
SP_SHARED_GROUP_NAME=sharedenv
…
#SP_RCOUNT controls how many days to check for certificate expiry
SP_RCOUNT=2
#SP_RDAY is the day to check/renew the certificate and restart the web dispatcher
SP_RDAY=Sunday
#SP_RTIME is the time on SP_RDAY to do the checks
SP_RTIME="07:30:00"
#SP_RSLEEP is the sleep time in the endless loop between checks
SP_RSLEEP=10m
#Controls the private CA certificate duration
SP_RENEW_VALID=8
#change the below SP_R* parameters for the private CA certificate details
SP_ROOTC=GB
SP_ROOTCA_VALID=1825
SP_EMAIL=rob.roosky@yahoo.com
SP_ROOTCN="Robert Russell's ROOT CA"
….
….
#SP_WEBSTEP_CERT_DUR needs to be in hours{h} format e.g. 48h
#SP_WEBSTEP_CERT_DUR=48h
SP_WEBSTEP_CERT_DUR=192h

WEBSTEP_HOST=${BASE_HOST}webstep
WEBSTEP_ZABAP_SRCSRV=4300
WEBSTEP_HOST_PORT=4301
WEBSTEP_ZWEBADM_PORT=4302
SP_APP_WEBSTEP_VERSION=1
#
WEBLETS_HOST=${BASE_HOST}weblets
WEBLETS_ZABAP_SRCSRV=5400
WEBLETS_HOST_PORT=5401
WEBLETS_ZWEBADM_PORT=5402
SP_APP_WEBLETS_VERSION=1
#
WEBPRIV_HOST=${BASE_HOST}webpriv
WEBPRIV_ZABAP_SRCSRV=6500
WEBPRIV_HOST_PORT=6501
WEBPRIV_ZWEBADM_PORT=6502
SP_APP_WEBPRIV_VERSION=1
#
WEBSTEPACME_HOST=${BASE_HOST}webstepacme
WEBSTEPACME_ZABAP_SRCSRV=7500
WEBSTEPACME_HOST_PORT=7501
WEBSTEPACME_ZWEBADM_PORT=7502
#SP_WEBSTEPACME_CERT_DUR=48h
SP_WEBSTEPACME_CERT_DUR=192h
SP_APP_WEBSTEPACME_VERSION=1
#
SP_TARGET_HOST=github.com
SP_TARGET_PORT=443
```
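Several of these values have a strict format. The comments say SP_WEBSTEP_CERT_DUR must be in hours (e.g. 48h), and SP_RDAY and SP_RTIME drive the weekly check. A quick pre-flight check can catch a typo before the build. This is a minimal sketch that assumes the .env file is valid bash (plain KEY=value lines, as shown above). `check_env_formats` is a hypothetical helper and not part of the project.

```bash
#!/usr/bin/env bash
# Hypothetical pre-flight check of the .env values edited in step 2.
# The file is sourced in a subshell so its variables do not leak out.

check_env_formats() {
  local env_file="$1"
  (
    set -a                                # export everything the file defines
    # shellcheck disable=SC1090
    . "$env_file" || exit 1
    set +a
    errors=0

    # SP_RDAY must be a full English weekday name, e.g. Sunday
    case "${SP_RDAY:-}" in
      Monday|Tuesday|Wednesday|Thursday|Friday|Saturday|Sunday) ;;
      *) echo "SP_RDAY '${SP_RDAY:-}' is not a weekday name"; errors=$((errors + 1)) ;;
    esac

    # SP_RTIME must be HH:MM:SS on a 24-hour clock
    if [[ ! "${SP_RTIME:-}" =~ ^([01][0-9]|2[0-3]):[0-5][0-9]:[0-5][0-9]$ ]]; then
      echo "SP_RTIME '${SP_RTIME:-}' is not HH:MM:SS"; errors=$((errors + 1))
    fi

    # SP_RSLEEP is assumed to be a sleep(1)-style interval such as 10m
    if [[ ! "${SP_RSLEEP:-}" =~ ^[0-9]+[smhd]?$ ]]; then
      echo "SP_RSLEEP '${SP_RSLEEP:-}' is not a sleep interval"; errors=$((errors + 1))
    fi

    # Step-CA certificate durations must be whole hours, e.g. 192h
    for var in SP_WEBSTEP_CERT_DUR SP_WEBSTEPACME_CERT_DUR; do
      if [[ ! "${!var:-}" =~ ^[0-9]+h$ ]]; then
        echo "$var '${!var:-}' is not in hours (e.g. 48h)"; errors=$((errors + 1))
      fi
    done

    # Day counts must be positive whole numbers
    for var in SP_RCOUNT SP_RENEW_VALID; do
      if [[ ! "${!var:-}" =~ ^[1-9][0-9]*$ ]]; then
        echo "$var '${!var:-}' is not a positive whole number"; errors=$((errors + 1))
      fi
    done

    [ "$errors" -eq 0 ]
  )
}
```

Usage, from the project directory: `check_env_formats ./.env && echo ".env looks OK"`.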

These settings determine how the services are set up and accessed:

- Via the Docker user: adapt dockeruser1 (group dockeruser1 is used by default).
- Via the shared group: adapt the group id 5501. A new group is created with that gid.
- Via a new directory/volume, which is created if it does not exist: adapt /docker-vol1.
- Via the following domain, host names and ports:
  - rjruss.org: change this to the required domain
  - hawtrial-step: Step-CA host name
  - hawtrial-webstep: web dispatcher with TLS via Step-CA
  - hawtrial-webstepacme: web dispatcher with TLS via Step-CA with the ACME provisioner
  - hawtrial-weblets: web dispatcher with TLS via Let’s Encrypt
  - hawtrial-webpriv: web dispatcher with a private CA using openssl

The parameters starting with SP_R* in the .env file control certificate generation and the private CA details. SP_EMAIL is used in the private CA setup and by the Let’s Encrypt container.

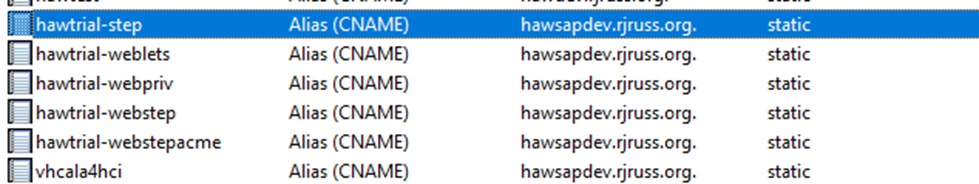

Set up DNS

Create DNS records for the hosts listed above. I use a Windows DNS server in my Hyper-V homelab.

The ports defined for each web dispatcher are:

| Web dispatcher | ABAP connection | External connection (example github.com) | Web Dispatcher admin port |
|---|---|---|---|
| TLS via Step-CA | 4300 | 4301 | 4302 |
| TLS via Step-CA with ACME provisioner | 7500 | 7501 | 7502 |
| TLS via Let’s Encrypt | 5400 | 5401 | 5402 |
| Private CA with openssl | 6500 | 6501 | 6502 |
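The DNS names follow directly from BASE_HOST and DOMAIN in the .env file, so they can be listed and checked from the Linux host instead of being typed by hand. This is a minimal sketch. It assumes the .env layout shown in step 2 and that the host resolves names through its normal resolver. `list_dns_names` and `check_dns` are hypothetical helpers.

```bash
#!/usr/bin/env bash
# Hypothetical helpers: derive the host names that need DNS records from
# .env, then report which ones do not resolve yet.

list_dns_names() {
  local env_file="$1"
  (
    set -a
    # shellcheck disable=SC1090
    . "$env_file" || exit 1
    set +a
    # STEP_HOST and the four web dispatcher hosts are built from BASE_HOST
    for var in STEP_HOST WEBSTEP_HOST WEBSTEPACME_HOST WEBLETS_HOST WEBPRIV_HOST; do
      if [ -n "${!var:-}" ]; then
        printf '%s.%s\n' "${!var}" "${DOMAIN:?DOMAIN not set}"
      fi
    done
  )
}

check_dns() {
  local name missing=0
  while read -r name; do
    if getent hosts "$name" >/dev/null; then
      echo "ok       $name"
    else
      echo "missing  $name"
      missing=$((missing + 1))
    fi
  done < <(list_dns_names "$1")
  [ "$missing" -eq 0 ]
}
```

Usage: `check_dns ./.env` prints one line per host and fails if any record is still missing.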

Step 3 Adapt the age-related password files

The age command (see step 4) is used to store passwords in an encrypted file on a Docker volume. The bash scripts in the base Docker container build share these passwords between containers.

Update the passwords in these .*.info files. The files should be deleted ***after completing the step 4 process***.

Edit the Let’s Encrypt credentials for Cloudflare DNS access in ./.LETS.info: `CLOUD_KEY="{>>>>>}"` and `CLOUD_DOMAIN_ID="{>>>>>}"`

Edit the private CA password in ./.PRIV.info: `CA_PW="testing!123"`

Edit the Step-CA password in ./.STEP.info: `PW="testing!123"`

Edit the Web Dispatcher admin password in ./.WEBSTEP.info: `ADM_PW="etcT!1_"`
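If you are new to age, the round trip below shows the idea behind keeping these passwords encrypted at rest. A .info file is encrypted to a public key and can only be read back where the matching private key (identity) is available. This is a minimal sketch using the standard `age` and `age-keygen` command-line tools, not the project's own scripts. The function names, key path and `.age` file names are hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical age round trip for one .info file (not the project's scripts).
# Needs the age and age-keygen binaries.

# Create a new identity (private key). Pass a path that does not exist yet,
# as age-keygen may refuse to overwrite an existing key file.
make_age_key() {
  local key_file="$1"
  age-keygen -o "$key_file" 2>/dev/null && chmod 600 "$key_file"
}

# Encrypt a plaintext file to the public key (recipient) of that identity.
encrypt_info() {
  local key_file="$1" plain="$2" out="$3" recipient
  recipient="$(age-keygen -y "$key_file")" || return 1
  age -r "$recipient" -o "$out" "$plain"
}

# Decrypt to stdout; this only works where the identity file is available.
decrypt_info() {
  local key_file="$1" enc="$2"
  age -d -i "$key_file" "$enc"
}
```

Usage: `make_age_key /root/sp-age.key && encrypt_info /root/sp-age.key .PRIV.info .PRIV.info.age`. After that, `decrypt_info /root/sp-age.key .PRIV.info.age` prints the original CA_PW line.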

Step 4 Create Volumes, Networks and encrypted passwords

The step 4 script installs the operating system prerequisite packages, creates the volumes and networks, and encrypts the passwords.

After running the script, the following volumes and network are available:

```text
# docker volume ls | grep sap
local sapwebdisp-stepca-docker_shared
local sp_sap_info_vol1
local sp_sap_keys_vol1
local sp_sap_step_vol1
local sp_sap_weblets_vol1
local sp_sap_webpriv_vol1
local sp_sap_webstep_vol1
local sp_sap_webstepacme_vol1

# docker network ls | grep sap
13c7ec216c25 sp-sap-net1 bridge local
```
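If you ever need to recreate the network or volumes by hand, for example on a second Docker host, the idea is to create each one only if it is missing. This is a minimal sketch using standard docker CLI commands. The helper names and the `DRY_RUN` switch are hypothetical, and the project's step 4 script may do this differently.

```bash
#!/usr/bin/env bash
# Hypothetical sketch: create the shared network and volumes only if they are
# missing. Set DRY_RUN=1 to print the docker commands instead of running them.

run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$*"
  else
    "$@"
  fi
}

# A user-defined bridge network lets containers reach each other by name.
ensure_network() {
  local net="$1"
  if [ "${DRY_RUN:-0}" = 1 ] || ! docker network inspect "$net" >/dev/null 2>&1; then
    run docker network create --driver bridge "$net"
  fi
}

ensure_volumes() {
  local vol
  for vol in "$@"; do
    if [ "${DRY_RUN:-0}" = 1 ] || ! docker volume inspect "$vol" >/dev/null 2>&1; then
      run docker volume create "$vol"
    fi
  done
}
```

Usage: run `ensure_network sp-sap-net1`, then `ensure_volumes sp_sap_info_vol1 sp_sap_keys_vol1 sp_sap_step_vol1`. Prefix either call with `DRY_RUN=1` to see the commands first.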

Essential setup for the ABAP system

Any ABAP system needs the message server HTTPS port (MSSPORT) set up. The following relies on this web dispatcher project running on the same server as the SAP trial container and on the new network sp-sap-net1. Therefore the SAP trial needs to be started as a new container with the appropriate options. I have also tested the project on non-trial 752 ABAP systems. Any backend ABAP system needs the profile parameter mentioned below.

We need a new bridge network because the containers are referenced by name. Containers on the default bridge network cannot reach each other by name, only by IP.

Run the SAP trial without the message server port:

```bash
docker run --stop-timeout 3600 --sysctl kernel.shmmni=32768 -m 32g -it \
  --network sp-sap-net1 --name a4h -h vhcala4hci \
  -p 3200:3200 -p 3300:3300 -p 8443:8443 -p 30213:30213 \
  -p 50000:50000 -p 50001:50001 -p 50002:50002 -p 50003:50003 \
  sapse/abap-cloud-developer-trial:ABAPTRIAL_2022_SP01 -skip-limits-check
```

Run the SAP trial with the message server port open:

```bash
docker run --stop-timeout 3600 --sysctl kernel.shmmni=32768 -m 32g -it \
  --network sp-sap-net1 --name a4h -h vhcala4hci \
  -p 3200:3200 -p 3300:3300 -p 8443:8443 -p 30213:30213 \
  -p 50000:50000 -p 50001:50001 -p 44601:44601 \
  sapse/abap-cloud-developer-trial:ABAPTRIAL_2022_SP01 -skip-limits-check
```

Replace the SSL server Standard PSE (transaction STRUST), because it only has a basic 1024-bit key.

Make the new certificate match the domain used in the .env file for the web dispatcher project.

Check that the SSL server PSE has been updated.
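Once the SSL server Standard PSE has been replaced, you can confirm from the Linux host which key size and subject the trial now presents. This is a minimal sketch using standard openssl commands. It assumes the certificate has been saved to a PEM file first, as shown in the comment. The helper names are hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical checks on a PEM certificate saved from the trial, e.g.:
#   openssl s_client -connect vhcala4hci.rjruss.org:50001 </dev/null 2>/dev/null | openssl x509 > a4h.pem

# Print the public key size in bits (e.g. 1024 before the PSE is replaced).
cert_key_bits() {
  openssl x509 -in "$1" -noout -text |
    sed -n 's/.*Public-Key: (\([0-9]*\) bit).*/\1/p' | head -n 1
}

# Print the subject, to compare the CN with the DOMAIN set in .env.
cert_subject() {
  openssl x509 -in "$1" -noout -subject
}

# Succeed only if the key is at least the given size (default 2048 bits).
cert_key_ok() {
  local bits
  bits="$(cert_key_bits "$1")"
  [ -n "$bits" ] && [ "$bits" -ge "${2:-2048}" ]
}
```

Usage: `cert_key_ok a4h.pem && cert_subject a4h.pem`.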

Add an HTTPS port to the message server on the SAP trial. An example script provides the required parameter:

```bash
# Copy the setupABAP_MSS_port.sh script to the a4h container and run it
docker cp setupABAP_MSS_port.sh a4h:/tmp/setupABAP_MSS_port.sh
docker exec a4h chmod +x /tmp/setupABAP_MSS_port.sh
docker exec a4h chown a4hadm:sapsys /tmp/setupABAP_MSS_port.sh
docker exec a4h su - a4hadm -c /tmp/setupABAP_MSS_port.sh
```

The output from the setupABAP_MSS_port.sh script should look like this:

```text
Add the following line to the /usr/sap/A4H/SYS/profile/A4H_ASCS01_vhcala4hci profile
ms/server_port_1 = PROT=HTTPS,PORT=44601,TIMEOUT=600,PROCTIMEOUT=60
```

You need to add that line to the profile manually.

Example screenshot of the profile file
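The script deliberately leaves the profile change to you. If you would rather script it, the sketch below appends the line only when it is not already present and takes a dated backup first. It is a hypothetical helper (`add_profile_line` is not part of the project) and assumes it runs as a4hadm inside the a4h container. It also refuses to add a second value for a parameter that is already set.

```bash
#!/usr/bin/env bash
# Hypothetical helper: idempotently append a parameter line to an SAP profile.

add_profile_line() {
  local profile="$1" line="$2" key
  [ -f "$profile" ] || { echo "profile $profile not found" >&2; return 1; }

  # Nothing to do if the exact line is already there
  if grep -qxF -- "$line" "$profile"; then
    echo "already present"
    return 0
  fi

  # Refuse to add a second, different value for the same parameter
  key="${line%%=*}"          # text before the first '='
  key="${key%% *}"           # drop the space before '='
  if grep -q "^${key}[[:space:]]*=" "$profile"; then
    echo "$key is already set with a different value" >&2
    return 1
  fi

  cp -p "$profile" "$profile.bak.$(date +%Y%m%d%H%M%S)" || return 1
  # Make sure the file ends with a newline before appending
  [ -n "$(tail -c1 "$profile")" ] && printf '\n' >> "$profile"
  printf '%s\n' "$line" >> "$profile"
  echo "added"
}
```

Usage: `add_profile_line /usr/sap/A4H/SYS/profile/A4H_ASCS01_vhcala4hci 'ms/server_port_1 = PROT=HTTPS,PORT=44601,TIMEOUT=600,PROCTIMEOUT=60'`.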

Connect to the a4h container and restart the SAP system. Then check the instance status:

```bash
sapcontrol -nr 00 -function RestartSystem
sapcontrol -nr 01 -function GetSystemInstanceList
```

```text
01.03.2025 09:52:50
GetSystemInstanceList
OK
hostname, instanceNr, httpPort, httpsPort, startPriority, features, dispstatus
vhcala4hci, 0, 50013, 50014, 3, ABAP|GATEWAY|ICMAN|IGS, GRAY
vhcala4hci, 1, 50113, 50114, 1, MESSAGESERVER|ENQUE, GREEN
```

Wait for both instances to turn GREEN and the system to be running. Then check the open message server ports. Port 44601 should be available:

```bash
sapcontrol -nr 01 -function GetAccessPointList
```

```text
18.02.2025 08:46:02
GetAccessPointList
OK
address, port, protocol, processname, active
127.0.0.1, 3201, ENQUEUE, enq_server, Yes
…
127.0.0.1, 3601, MS, msg_server, Yes
172.18.0.2, 3601, MS, msg_server, Yes
…
127.0.0.1, 44601, HTTPS, msg_server, Yes
172.18.0.2, 44601, HTTPS, msg_server, Yes
```
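Waiting for GREEN and checking for port 44601 can also be scripted against the same sapcontrol output. This is a minimal sketch that assumes the comma-separated layout shown above. The function names and the 30-second polling interval are hypothetical choices. `wait_for_green` expects to run inside the a4h container, where sapcontrol is available.

```bash
#!/usr/bin/env bash
# Hypothetical helpers that read sapcontrol output on stdin. The column
# layouts match the GetSystemInstanceList and GetAccessPointList output above.

# Succeeds when at least one instance row exists and every row is GREEN.
all_instances_green() {
  awk -F', ' '
    { sub(/[ \t\r]+$/, "") }                      # trim trailing blanks
    NF >= 7 && $1 != "hostname" { rows++; if ($7 != "GREEN") bad++ }
    END { exit !(rows > 0 && bad == 0) }'
}

# Succeeds when an active HTTPS access point is listed for the given port.
https_port_active() {
  awk -F', ' -v port="$1" '
    { sub(/[ \t\r]+$/, "") }
    $2 == port && $3 == "HTTPS" && $5 == "Yes" { found = 1 }
    END { exit !found }'
}

# Poll GetSystemInstanceList every 30 seconds until all instances are GREEN,
# giving up after the timeout in seconds (default 900).
wait_for_green() {
  local timeout="${1:-900}" waited=0
  until sapcontrol -nr 01 -function GetSystemInstanceList | all_instances_green; do
    [ "$waited" -ge "$timeout" ] && return 1
    sleep 30
    waited=$((waited + 30))
  done
}
```

Usage: `wait_for_green && sapcontrol -nr 01 -function GetAccessPointList | https_port_active 44601 && echo "44601 HTTPS active"`. RestartSystem may return before the instances have actually stopped, so give it a moment before polling.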

Connect to the trial on HTTPS port 50001 and check that the certificate reflects the domain.

Before building the web dispatcher, check the network setup. HTTPS port 50001 has to work before you proceed. The 44601 check can fail if that port is not published on the container, because connections are made container to container over the Docker network.

If 44601 is not a published Docker port on the running container, you can gamble on carrying on anyway. The web dispatcher should still connect to it when it starts on the same Docker network. If you have published this port, one of the 44601 connection checks should succeed.

```text
./offline-abap-check.sh
No extensions in certificate
2025/02/16 07:33:57 : INFO : does not have key usage 'Certificate Sign' so guess/check if self signed : subject=C=GB, O=RJRUSS, CN=*.rjruss.org
2025/02/16 07:33:58 : INFO : Days till cert Expires 4701
2025/02/16 07:33:58 : INFO : Appears self signed so import =C=GB, O=RJRUSS, CN=*.rjruss.org so this will be used
2025/02/16 07:33:58 : INFO : ---vhcala4hci.rjruss.org:50001 Verify certificate chain at level 1
2025/02/16 07:34:28 : INFO : 0 ------ return code
2025/02/16 07:34:28 : INFO : INFO: successful connection to BB2 vhcala4hci.rjruss.org over HTTPS on PORT 50001
2025/02/16 07:34:28 : INFO : ---vhcala4hci.rjruss.org:50001 end of level 1---
2025/02/16 07:34:28 : INFO : ---vhcala4hci.rjruss.org:50001 Verify certificate chain at level 5
2025/02/16 07:34:59 : INFO : 0 ------ return code
2025/02/16 07:34:59 : INFO : INFO: successful connection to BB2 vhcala4hci.rjruss.org over HTTPS on PORT 50001
2025/02/16 07:34:59 : INFO : ---vhcala4hci.rjruss.org:50001 end of level 5---
2025/02/16 07:34:59 : INFO : ---vhcala4hci.rjruss.org:44601 Verify HTTPS connection
2025/02/16 07:34:59 : INFO : 7 ------ return code ** ignore if message server 44601 port not open on container
2025/02/16 07:34:59 : ERROR : Failed to validate connection to BB2 vhcala4hci.rjruss.org over HTTPS on PORT 44601
2025/02/16 07:34:59 : INFO : ---vhcala4hci.rjruss.org:44601 end of HTTPS connection check ---
2025/02/16 07:34:59 : INFO : ---vhcala4hci.rjruss.org:44601 Verify HTTPS connection
2025/02/16 07:34:59 : INFO : 7 ------ return code** ignore if message server 44601 port not open on container
2025/02/16 07:34:59 : ERROR : Failed to validate connection to BB2 vhcala4hci.rjruss.org over HTTPS on PORT 44601
2025/02/16 07:34:59 : INFO : ---vhcala4hci.rjruss.org:44601 end of HTTPS connection check ---
/tmp/tmp.cyq4arPhtb
```
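The offline script reports two things: whether a TLS connection works and how many days the certificate has left. Both can be reproduced by hand with openssl. This is a minimal sketch that assumes openssl and GNU date on the Linux host. It is not how offline-abap-check.sh is implemented, and the helper names are hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical manual versions of two checks: fetch the certificate a server
# presents, and count the whole days until a PEM certificate expires.

# Print the leaf certificate (PEM) presented by host:port.
fetch_cert() {
  local host="$1" port="$2"
  openssl s_client -connect "${host}:${port}" -servername "$host" </dev/null 2>/dev/null |
    openssl x509
}

# Whole days until the certificate in the given PEM file expires.
cert_days_left() {
  local end_date end_epoch
  end_date="$(openssl x509 -in "$1" -noout -enddate | cut -d= -f2)" || return 1
  end_epoch="$(date -d "$end_date" +%s)" || return 1
  echo $(( (end_epoch - $(date +%s)) / 86400 ))
}
```

Usage: `fetch_cert vhcala4hci.rjruss.org 50001 > /tmp/a4h.pem && cert_days_left /tmp/a4h.pem`.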

**Delete these password files. ENSURE YOU KNOW THE PASSWORDS before deleting them.**

```bash
ls -a .*.info
rm -f .LETS.info .PRIV.info .STEP.info .TEST.info .WEBSTEP.info
```

Let’s Encrypt checks

The start command in the Docker file sp-sap-weblets-build-split-docker is commented out.

If you use Cloudflare and have entered the Cloudflare key details in .LETS.info, uncomment this line. Otherwise the Let’s Encrypt container will start but not do anything.

```dockerfile
#CMD [ "/usr/bin/bash", "-c", "/home/dwdadm/startup/start-weblets.sh"]
```
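If you prefer not to edit the file by hand, a one-line sed does the same job. This is a minimal sketch. `enable_weblets_start` is hypothetical, and the Dockerfile path you pass is an assumption: point it at the Let’s Encrypt container definition (sp-sap-weblets-build-split-docker) in your copy of the project.

```bash
#!/usr/bin/env bash
# Hypothetical helper: uncomment the start-weblets.sh CMD line in the given
# Dockerfile, keeping a .bak copy of the original.

enable_weblets_start() {
  local dockerfile="$1"
  grep -q '^#CMD .*start-weblets\.sh' "$dockerfile" || {
    echo "no commented start-weblets.sh CMD found in $dockerfile" >&2
    return 1
  }
  # Remove only the leading '#' on the start-weblets.sh line
  sed -i.bak '/start-weblets\.sh/ s/^#CMD/CMD/' "$dockerfile"
}
```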

Step 5 Build and run it

The script build-it-and-run-it.sh is set up to create a combined compose file from the individual Dockerfiles.

It needs the SAP Web Dispatcher 7.54 support package for Linux on x86_64 64bit and SAPCAR, copied to the subdirectory ./sp-sap-image/bin. This error indicates that the files are missing:

“Error: missing SAP web dispatcher SAR file or SAPCAR file, exiting”

The downloads are available from:

https://softwaredownloads.sap.com/file/0020000000498472024

https://softwaredownloads.sap.com/file/0020000001532182023

The files should be downloaded and named as follows:

```text
sp-sap-image/bin/SAPCAR
sp-sap-image/bin/SAPWEBDISP_SP_235-80007304.SAR
```
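A quick check before running the build confirms that both files are in place under the expected names. This is a minimal sketch. `check_sap_files` is hypothetical, and the default directory assumes you run it from the project directory. It also marks SAPCAR as executable if it is not already.

```bash
#!/usr/bin/env bash
# Hypothetical pre-build check for the two SAP downloads.

check_sap_files() {
  local bin_dir="${1:-./sp-sap-image/bin}" ok=0
  local sar="$bin_dir/SAPWEBDISP_SP_235-80007304.SAR" sapcar="$bin_dir/SAPCAR"
  [ -s "$sar" ] || { echo "missing or empty: $sar"; ok=1; }
  if [ ! -s "$sapcar" ]; then
    echo "missing or empty: $sapcar"
    ok=1
  elif [ ! -x "$sapcar" ]; then
    chmod +x "$sapcar" && echo "made executable: $sapcar"
  fi
  return "$ok"
}
```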

Run the build as the Docker user:

```bash
su - dockeruser1
cd /srv/docker-config/sapwebdisp-stepca-letsencrypt-docker
./build-it-and-run-it.sh
```

If the SAPCAR and SAPWEBDISP_SP_235-80007304.SAR files are missing, the script prompts for an S-ID and password to download them to the correct directory.

Step 6 Set up the host Windows/client computer

Install the Step-CA root certificate on the Windows host.

Run this script to get the PowerShell commands that add the required certificates to the certificate store. It also shows the URLs for the various web dispatchers.

Example output:

```powershell
$B64="-----BEGIN CERTIFICATE-----
….
….
….
-----END CERTIFICATE-----"
$B64 | Out-File .\WEBPRIV.crt
Import-Certificate -FilePath .\WEBPRIV.crt -CertStoreLocation cert:\CurrentUser\Root
```

The output has two sections, which import the certificates for the private CA and the Step-CA based TLS authorities.

Let’s Encrypt certificates are already trusted, so no further action is required for them.
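If you only have a root certificate as a PEM file, for example copied out of one of the CA containers, you can produce the same kind of PowerShell commands by hand. This is a minimal sketch that prints commands in the shape of the example output above. `print_ps_import` is hypothetical, and the PEM file location is up to you.

```bash
#!/usr/bin/env bash
# Hypothetical sketch: print PowerShell commands that import a PEM root
# certificate into the current user's Trusted Root store on Windows.

print_ps_import() {
  local pem="$1" name="$2"
  grep -q -- '-----BEGIN CERTIFICATE-----' "$pem" || {
    echo "not a PEM certificate: $pem" >&2
    return 1
  }
  printf '$B64="%s"\n' "$(cat "$pem")"
  printf '$B64 | Out-File .\\%s.crt\n' "$name"
  printf 'Import-Certificate -FilePath .\\%s.crt -CertStoreLocation cert:\\CurrentUser\\Root\n' "$name"
}
```

Usage: `print_ps_import root_ca.crt WEBSTEP`, where root_ca.crt is a hypothetical file name. Paste the output into a PowerShell session on the Windows host.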

Example output of the URLs:

```text
-WEBLETS_HOSTs
Web dispatcher link for github.com https://hawtrial-weblets.rjruss.org:5401
Web dispatcher link to a4h https://hawtrial-weblets.rjruss.org:5400
---
-WEBPRIV_HOSTs
Web dispatcher link for github.com https://hawtrial-webpriv.rjruss.org:6501
Web dispatcher link to a4h https://hawtrial-webpriv.rjruss.org:6500
---
-WEBSTEPACME_HOSTs
Web dispatcher link for github.com https://hawtrial-webstepacme.rjruss.org:7501
Web dispatcher link to a4h https://hawtrial-webstepacme.rjruss.org:7500
---
-WEBSTEP_HOSTs
Web dispatcher link for github.com https://hawtrial-webstep.rjruss.org:4301
Web dispatcher link to a4h https://hawtrial-webstep.rjruss.org:4300
---
```

**Note:** add the Fiori Launchpad suffix to the a4h URLs. Without it you get a 404 Not Found, although the TLS connection itself succeeds.

/sap/bc/ui5_ui5/ui2/ushell/shells/abap/FioriLaunchpad.html

For example, for the Let’s Encrypt web dispatcher:

https://hawtrial-weblets.rjruss.org:5400/sap/bc/ui5_ui5/ui2/ushell/shells/abap/FioriLaunchpad.html
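You can also build the full Fiori Launchpad URL and test it from the Linux host. This is a minimal sketch; `flp_url` and `check_url` are hypothetical helpers. curl validates against the host's trust store. For the Step-CA and private CA dispatchers, either trust the root on the Linux host as well or pass it with curl's `--cacert` option.

```bash
#!/usr/bin/env bash
# Hypothetical helpers: build the Fiori Launchpad URL for a web dispatcher
# and report the HTTP status code returned over TLS.

FLP_PATH="/sap/bc/ui5_ui5/ui2/ushell/shells/abap/FioriLaunchpad.html"

flp_url() {
  local host="$1" domain="$2" port="$3"
  printf 'https://%s.%s:%s%s\n' "$host" "$domain" "$port" "$FLP_PATH"
}

# Prints the HTTP status code; curl exits non-zero if the TLS handshake fails.
check_url() {
  curl -sS -o /dev/null -w '%{http_code}\n' "$@"
}
```

Usage: `check_url "$(flp_url hawtrial-weblets rjruss.org 5400)"`. For a Step-CA dispatcher, use `check_url --cacert root_ca.crt "$(flp_url hawtrial-webstep rjruss.org 4300)"`, where root_ca.crt is a hypothetical file name. As noted above, a 404 usually means the path suffix is missing.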

Operation Information

The Docker control scripts are set up to renew the certificates every week, apart from Let’s Encrypt, whose certificates have a longer life. A control script lets you change the check day, the sleep time for the loop, the certificate validity length and the expiry check.

By default the script sleeps for a set interval (SP_RSLEEP) and “wakes” up to check and renew certificates on Sunday at 07:30.

In the .env settings this is controlled by the following variables.

SP_RDAY=Sunday

SP_RTIME="07:30:00"
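The loop below makes the weekly behaviour concrete. On every wake-up it checks whether it is SP_RDAY at or after SP_RTIME, and if so it runs the renewal once that day. It is a hypothetical sketch of the idea, not the project's control script. `renew_certs` is a placeholder for the check/renew/restart step, and SP_RSLEEP is assumed to be a value that `sleep` accepts, such as 10m.

```bash
#!/usr/bin/env bash
# Hypothetical sketch of a weekly wake-up loop driven by SP_RDAY, SP_RTIME
# and SP_RSLEEP. renew_certs is a placeholder you would define yourself.

# Succeeds when "now" ("Weekday HH:MM:SS") is on day $1 at or after time $2.
check_due() {
  local rday="$1" rtime="$2"
  local now="${3:-$(LC_ALL=C date '+%A %H:%M:%S')}"
  local day="${now%% *}" clock="${now#* }"
  # Zero-padded HH:MM:SS strings sort correctly as plain text
  [ "$day" = "$rday" ] && [[ ! "$clock" < "$rtime" ]]
}

weekly_loop() {
  local last_run=""
  while true; do
    # Run at most once per calendar day, and only on the configured day
    if check_due "$SP_RDAY" "$SP_RTIME" && [ "$last_run" != "$(date +%F)" ]; then
      renew_certs                 # placeholder: check expiry, renew, restart
      last_run="$(date +%F)"
    fi
    sleep "$SP_RSLEEP"
  done
}
```

This design explains why changing the day to Tuesday still gives only one check per week. The renewal only runs on the configured weekday.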

By default the certificate validity is set to 8 days, controlled by

SP_RENEW_VALID=8

The expiry check is set to 2 days: if the certificate expires in less than 2 days, it is renewed.

This is controlled by

SP_RCOUNT=2
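The SP_RCOUNT test maps neatly onto `openssl x509 -checkend`. That option takes a number of seconds and exits 0 only if the certificate is still valid after that time. This is a minimal sketch; `needs_renewal` is hypothetical and not necessarily how the project's scripts decide.

```bash
#!/usr/bin/env bash
# Hypothetical sketch of the SP_RCOUNT expiry test: renew when the
# certificate expires within the given number of days.

needs_renewal() {
  local cert="$1" days="${2:-${SP_RCOUNT:-2}}"
  if [ ! -r "$cert" ]; then
    echo "cannot read $cert" >&2
    return 2
  fi
  # -checkend N exits 0 if the certificate is still valid N seconds from now
  if openssl x509 -in "$cert" -noout -checkend $(( days * 86400 )) >/dev/null; then
    return 1          # valid beyond the window: keep it
  fi
  return 0            # expires within the window, already expired, or unparsable: renew
}
```

Usage: `if needs_renewal /path/to/cert.pem 2; then echo "renew"; fi`. With a 2-day window, a certificate with 1 day left is renewed, while a freshly issued 8-day certificate is not.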

These values can be changed for all containers with the sleep-control-all.sh script.

The following commands:

- set the expiry check to 1 day
- change the wake-up time to Tuesday at 07:40
- set the sleep interval to 2 minutes
- set the certificate life to 2 days
- display the settings

```bash
./sleep-control-all.sh change_check_days_to_expire 1
./sleep-control-all.sh change Tuesday 07:40
./sleep-control-all.sh sleep_interval 2m
./sleep-control-all.sh renew_certs_days_length 2
./sleep-control-all.sh display
```

**Note:** the script still checks only once a week. For example, if Tuesday was 4th March, the next automated wake-up would be 11th March. For a more aggressive wake-up schedule, sleep-control-all.sh itself needs to be run on a regular schedule.

**Let’s Encrypt certificate life is not altered by these scripts, as Let’s Encrypt issues the certificates.**

Miscellaneous actions

Remove the demo certificates from the certificate store. This only works for the example code: if the root CA name is changed, the `*basestep*` pattern won't match anything.

```powershell
Get-ChildItem -Path Cert:\CurrentUser\Root | Where-Object { $_.Subject -like '*basestep*' } | ForEach-Object { certutil -user -delstore Root $_.Thumbprint }
```

Removing the private CA depends on the SP_ROOTCN value. By default it is:

```bash
SP_ROOTCN="Robert Russell's ROOT CA"
```

To delete it, change the `-like` pattern to match that name:

```powershell
Get-ChildItem -Path Cert:\CurrentUser\Root | Where-Object { $_.Subject -like '*Robert Russell*' } | ForEach-Object { certutil -user -delstore Root $_.Thumbprint }
```

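HSTS Web Dispatcher issues

Example HTTP Strict Transport Security (HSTS) error message:

“You cannot visit hawsap-webstepacme.rjruss.org right now because the website uses HSTS.”

Once a browser has recorded an HSTS policy for a host, it does not let you click through certificate errors for that host. The issuing root CA therefore has to be trusted on the client. Relevant SAP Notes and parameter:

3359291 - Configuring HSTS with Web Dispatcher or ICM

2202116 - Support of HTTP Strict Transport Security

Parameter: icm/HTTP/strict_transport_security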